Introduction

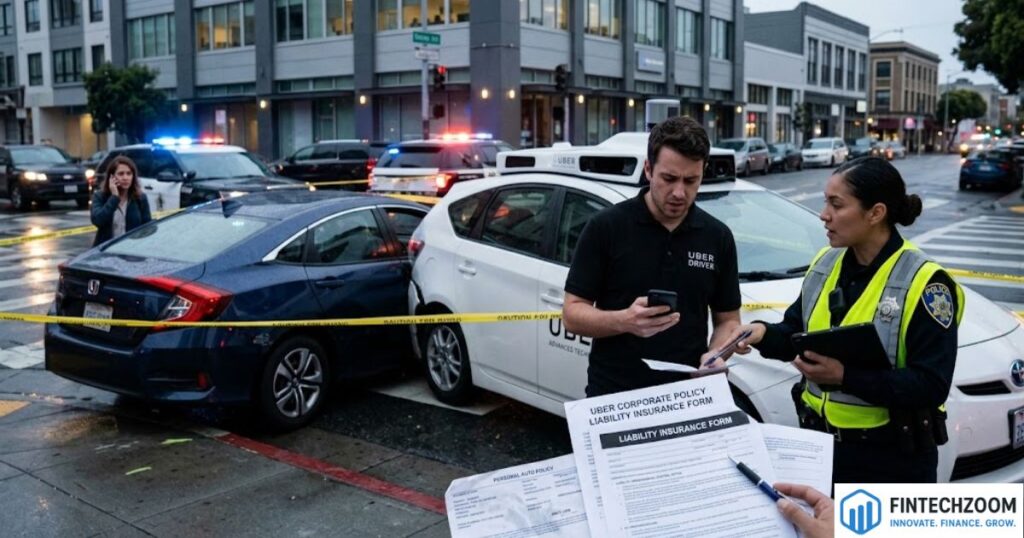

The intersection of artificial intelligence and public transportation has created a complex web of legal questions that current statutes are still racing to address. One of the most contentious scenarios arises when an Uber self-driving backup driver causes an accident, raising difficult questions of liability and insurance. While autonomous technology promises a drastic reduction in human error, the transition period requires human safety operators to remain behind the wheel. This creates a hybrid liability environment in which the line between a software malfunction and human negligence becomes very thin.

When these high-tech vehicles are involved in collisions, the aftermath often triggers a multi-layered investigation involving federal safety boards, state regulators, and private insurance adjusters. The core of the issue lies in the “human-in-the-loop” requirement, under which a person is tasked with monitoring a system that is designed to operate independently. This paradox of automation, expecting a human to stay perfectly alert while the car does the work, can lead to catastrophic results. Understanding how the law handles these crashes is essential for passengers, pedestrians, and legal professionals alike.

The Role of Human Oversight in Autonomous Testing

The primary function of a safety operator is to serve as the final fail-safe for an experimental system. These individuals are trained to intervene the moment the vehicle’s sensors fail to recognize a hazard or when the onboard computer makes a processing error. However, cognitive studies suggest that maintaining high levels of vigilance is difficult when the primary task is passive monitoring. When an intervention is missed, the legal system must determine if the failure was a reasonable human limitation or a clear act of negligence.

In many jurisdictions, the backup driver is legally considered the operator of the vehicle, regardless of whether the autonomous mode is engaged. This means they are held to the same standard of care as any other motorist on the road. If they are distracted by a mobile device or fail to keep their eyes on the road, they can face personal liability and even criminal charges. The expectation is that the human will override the machine at any sign of danger, making their role both a technical necessity and a significant legal vulnerability.

Determining Fault in Hybrid Driving Environments

Assigning blame in a crash involving an autonomous fleet requires a deep dive into the vehicle’s data logs. Investigators look at “disengagement” data, which shows exactly when the computer handed control back to the human or when the human took over manually. If the system provided a sufficient warning and the operator failed to react, the fault typically rests with the individual. Conversely, if the system failed silently without alerting the operator, the focus shifts toward the developer of the software.
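To make that rule of thumb concrete, here is a minimal Python sketch that applies it to a single, simplified log event. The record fields, the `where_fault_points` function, and the 1.5-second reaction threshold are all hypothetical assumptions for illustration; real disengagement logs are far richer, and courts rely on expert reconstruction rather than a fixed cutoff.

```python
from dataclasses import dataclass
from typing import Optional

# HYPOTHETICAL threshold: minimum warning time (seconds) treated as
# "sufficient" for a human to react. Real cases rely on expert testimony.
ASSUMED_MIN_REACTION_S = 1.5


@dataclass
class DisengagementRecord:
    """Hypothetical, simplified view of one event from a vehicle's data log."""
    impact_time: float                    # seconds from start of the log
    takeover_alert_time: Optional[float]  # when the system asked the human to take over (None = no alert)
    human_takeover_time: Optional[float]  # when the human took control (None = never)


def where_fault_points(rec: DisengagementRecord,
                       min_reaction_s: float = ASSUMED_MIN_REACTION_S) -> str:
    """Apply the rule of thumb: an adequate warning that went unanswered
    points toward the operator; a silent failure points toward the developer."""
    took_over_before_impact = (
        rec.human_takeover_time is not None
        and rec.human_takeover_time < rec.impact_time
    )
    if took_over_before_impact:
        # The human had control before impact; the simple rule does not
        # apply, so a fuller reconstruction would be needed.
        return "undetermined"
    if rec.takeover_alert_time is None:
        return "developer"  # silent failure: no alert and no human takeover
    if rec.impact_time - rec.takeover_alert_time >= min_reaction_s:
        return "operator"   # sufficient warning, but no timely takeover
    # Assumption: an alert too late to act on is treated like a silent failure.
    return "developer"


example = DisengagementRecord(impact_time=10.0, takeover_alert_time=6.0,
                              human_takeover_time=None)
print(where_fault_points(example))  # operator
```

A crash where the alert came four seconds before impact and the operator never took control returns `"operator"`; the same crash with no alert at all returns `"developer"`.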

This “split-second” analysis is what makes these cases so complex for the courts. Unlike standard car accidents, where witness testimony and skid marks tell the story, autonomous crashes rely on large volumes of sensor data and code execution logs. Legal teams often hire specialized forensic engineers to determine whether the vehicle’s LIDAR, radar, or cameras accurately perceived the environment. The result is often a “comparative negligence” ruling, in which fault is divided between the human driver and the corporation managing the technology.
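The arithmetic behind a fault split is simple once percentages are assigned. The following Python sketch uses hypothetical figures (a $500,000 award, 30% fault to the backup driver and 70% to the developer); `apportion_damages` is an illustrative helper, not a legal formula, and it ignores state-specific rules such as joint and several liability or reductions for the plaintiff’s own share of fault.

```python
from decimal import Decimal, ROUND_HALF_UP


def apportion_damages(total: Decimal, fault_pct: dict[str, int]) -> dict[str, Decimal]:
    """Split a damages award among parties in proportion to their fault.

    fault_pct maps each party to a whole-number percentage; the
    percentages must add up to 100. Each share is rounded to the cent,
    so uneven splits can differ from the total by a cent.
    """
    if sum(fault_pct.values()) != 100:
        raise ValueError("fault percentages must add up to 100")
    cent = Decimal("0.01")
    return {
        party: (total * pct / 100).quantize(cent, rounding=ROUND_HALF_UP)
        for party, pct in fault_pct.items()
    }


# Hypothetical example figures, not drawn from any real case.
award = Decimal("500000")
shares = apportion_damages(award, {"backup driver": 30, "developer": 70})
for party, amount in shares.items():
    print(f"{party}: ${amount:,.2f}")
```

With these inputs the script prints $150,000.00 for the backup driver and $350,000.00 for the developer. If the driver is an employee, respondeat superior may mean the employer ultimately pays the driver’s share as well.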

Commercial Insurance Tiers for Rideshare Platforms

Insurance coverage for these vehicles typically operates on a tiered system based on the status of the app. When the vehicle is in “testing mode” without a passenger, the coverage is often provided through a specific commercial policy held by the autonomous vehicle developer. However, the moment the vehicle is used to provide a service to the public, the insurance requirements jump significantly. Most states require a primary liability policy of at least $1 million for active rides involving passengers.
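The tier logic described above can be expressed as a small lookup. This Python sketch is hypothetical: the `AppStatus` states and the `coverage_layer` function are illustrative names, the $1 million figure is the typical minimum for active rides mentioned in this article, and the testing-mode limit is left unspecified because it depends on the developer’s policy and state rules.

```python
from enum import Enum
from typing import Optional


class AppStatus(Enum):
    """Hypothetical app states used to pick an insurance layer."""
    TESTING_NO_PASSENGER = "testing mode, no passenger"
    EN_ROUTE_TO_PASSENGER = "en route to pick up a passenger"
    PASSENGER_ON_BOARD = "passenger in the vehicle"


# Typical minimum primary liability limit for active rides, in US dollars.
ACTIVE_RIDE_MIN_LIMIT = 1_000_000


def coverage_layer(status: AppStatus) -> tuple[str, Optional[int]]:
    """Return (which policy is primary, minimum limit or None if it varies)."""
    if status is AppStatus.TESTING_NO_PASSENGER:
        # Testing without a passenger: the developer's own commercial policy,
        # with limits that depend on the company and the state.
        return ("developer's commercial testing policy", None)
    # Any active ride (en route or passenger on board) triggers the higher tier.
    return ("primary rideshare liability policy", ACTIVE_RIDE_MIN_LIMIT)


for status in AppStatus:
    policy, limit = coverage_layer(status)
    shown = f"${limit:,}" if limit is not None else "varies"
    print(f"{status.value}: {policy} ({shown})")
```

Keeping the testing-mode limit as `None` rather than a made-up number reflects that only the active-ride minimum is broadly standardized.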

The analysis also depends on whether the backup driver is an employee or an independent contractor. Under the legal doctrine of respondeat superior, an employer is generally responsible for the actions of its employees while they are acting within the scope of their employment. This means that if a safety driver employed by the company causes a crash during a shift, the parent company’s commercial insurance is typically the primary source of recovery for the victims. This deep-pocketed coverage is designed to ensure that victims of high-tech accidents are not left without recourse because of the experimental nature of the transport.

Product Liability Versus Personal Negligence

A major shift in the legal landscape is the move toward product liability claims. In traditional accidents, you sue the driver. In autonomous accidents, you might sue the manufacturer. If a claimant can prove that the vehicle had a “design defect,” such as a software algorithm that struggles to identify pedestrians at night, the manufacturer can be held strictly liable. This means the victim does not necessarily have to prove the company was “careless,” only that the product was defective and unreasonably dangerous.

However, companies often defend these claims by pointing back to the backup driver. They argue that the presence of a human safety operator is intended to mitigate any known or unknown defects in the software. This creates a circular argument where the company blames the driver for not fixing the machine’s mistake, while the driver blames the machine for creating the hazard. Navigating this tension requires a sophisticated understanding of both tort law and software engineering standards.

Impact of State and Federal Regulations

Currently, there is no single federal law that governs the liability of autonomous vehicles across the United States. Instead, a “patchwork” of state regulations exists. Some states, like Arizona and Florida, have been very permissive, allowing for rapid testing with minimal oversight. Others, like California, have implemented strict reporting requirements for every collision and disengagement. These local laws dictate everything from how much insurance a company must carry to who is listed on the police report as the “driver.”

New legislative efforts are beginning to focus on “black box” requirements, mandating that all autonomous vehicles carry data recorders that are accessible to law enforcement. This transparency is intended to prevent companies from hiding technical failures behind proprietary secrets. As the technology moves toward Level 4 and Level 5 autonomy, where no human driver is required at all, the laws will likely shift toward a purely manufacturer-based liability model. For now, however, the human backup remains a central figure in the legal process.

Victim Rights and Compensation Paths

For a person injured by an autonomous vehicle, the path to compensation can be daunting. Because these cases often involve major tech corporations, the legal defense is usually aggressive. Victims are entitled to recover damages for medical expenses, lost wages, and pain and suffering, but they may face delays as the various parties argue over who is responsible. It is common for these cases to end in confidential settlements, which prevents a legal precedent from being set for future accidents.

One unique aspect for victims is the availability of high-resolution video and sensor data from the vehicle itself. Unlike a standard “he-said, she-said” accident, an autonomous vehicle usually records a 360-degree view of the entire event. This evidence can be a powerful tool for the plaintiff, but only if it is preserved before the company overwrites it. Prompt legal action, such as sending a preservation letter (often called a spoliation letter), is often required to ensure this digital evidence is not lost or deleted.

Future Outlook for Autonomous Insurance

As the technology matures, the insurance industry is evolving from “personal” auto insurance toward “product” and “cyber” insurance. In a future where humans rarely touch the steering wheel, the risk shifts from the individual’s driving record to the software’s safety record. Insurers may increasingly price policies based on the specific version of the autonomous software being used, much as software companies are rated for cybersecurity risks.

This shift will likely lead to a more streamlined claims process for the public. If a robotaxi causes a crash, there is no “at-fault” driver to argue with; the liability sits with the fleet operator. This could eventually lead to a “no-fault” system for autonomous transport, in which victims are compensated quickly from a central fund and insurers then recover the costs from manufacturers through subrogation. Until that day arrives, the role of the backup driver remains the most significant variable in any liability calculation.

Liability Comparison Table

| Factor | Backup Driver Error | Software/System Defect |
|---|---|---|
| Primary Liability | Individual & Employer | Manufacturer / Developer |
| Evidence Source | Eyewitness / Dashcam | Sensor Logs / Code Audit |
| Legal Standard | Negligence | Product Liability / Strict Liability |
| Insurance Layer | Commercial Auto Policy | Product Liability Policy |
| Typical Defense | “System failed to alert” | “Driver was distracted” |

FAQs

Can an Uber backup driver be sued personally?

Yes. If the driver was negligent, such as by being distracted by a phone or failing to monitor the road, they can be named as a defendant in a lawsuit. However, the company’s insurance usually covers the damages.

What happens if the car was in “self-driving” mode during the crash?

The company may be liable for a system failure, but the backup driver is still expected to intervene. Liability is often shared between the driver and the tech provider.

Does Uber’s $1 million insurance always apply?

The $1 million policy typically applies when a passenger is in the vehicle or the driver is en route to a passenger. If the vehicle is in testing mode without a passenger, different commercial limits apply.

Conclusion

The evolution of autonomous transport is one of the most significant technological leaps of the century, but it brings a unique set of legal hurdles. Disputes over liability and insurance when an Uber self-driving backup driver causes an accident highlight the friction between human limitations and machine capabilities. While these programs aim to eliminate crashes caused by human error (a widely cited NHTSA figure assigns the critical reason to the driver in about 94% of crashes), the “safety driver” remains a point of failure that the legal system is still learning to categorize.

For the industry to move forward, there must be a balance between encouraging innovation and protecting the public. Clearer federal guidelines and standardized data-sharing protocols would go a long way in simplifying the aftermath of these collisions. Until the technology reaches a point of total autonomy, the human behind the wheel and the insurance policies backing them will continue to be the primary focus of accident litigation. As a consumer or a participant in the sharing economy, staying informed about these changing liability standards is the best way to ensure your rights are protected in a world of self-driving machines.